Testing the Waters of Liquid Cooling with Asetek ServerLSL

Trends in processor wattage, rack density and the need for sustained, unthrottled throughput have made it clear that liquid cooling will become a centerpiece of HPC data centers sooner rather than later. HPC sites in particular face problems with high-density clusters that neither purely air-cooled servers nor cutting-edge air-cooled data centers will be able to solve.

In some cases, higher-performance CPUs and GPUs packed into high-density server racks exceed the ability of airflow to cool them sufficiently. Stopgaps such as hot-aisle/cold-aisle containment and rear-door heat exchangers are already hitting their limits. Beyond basic cooling requirements, air-cooled data centers have subtler limitations: CPU throttling reduces overall compute throughput, and sustained heat loads decrease reliability. Workloads such as high-frequency trading depend on nodes that can be overclocked without risking throttling or reduced reliability.

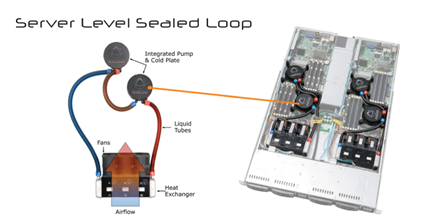

Many sites also face practical obstacles that keep them from moving to a complete liquid cooling solution such as Asetek RackCDU D2C: budget limitations, fear of liquid in the data center, or a current mix of low-density and high-density racks. Asetek ServerLSL™ (Server Level Sealed Loop) is ideal for these installations. ServerLSL replaces less efficient air coolers and lets data centers use the highest-performing CPUs and GPUs without changes to data center infrastructure.

How ServerLSL Works

Asetek ServerLSL is “Liquid Enhanced Air” cooling for server nodes. Hot water pulls heat from high-heat-flux components, and radiators inside the node then release that heat into the air stream. This approach moves heat that traditional air heat sinks cannot handle into the data center without exceeding the thermal limits of the server’s components. Because the low-pressure pumps are contained within each ServerLSL node, individual liquid-cooled servers can be installed in the same racks as air-cooled servers. This is a key advantage over centralized pumping systems, which require high pressures and whole racks (or multiple racks) of liquid-cooled servers.
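The advantage of using liquid to move heat away from a small, high-flux component comes from water’s much higher capacity to carry heat per unit volume. In ServerLSL the heat still ends up in the air; the liquid’s job is to carry it efficiently from the cold plate to a larger radiator. The minimal Python sketch below illustrates the physics using Q = ṁ·c_p·ΔT. It assumes approximate room-temperature textbook property values for water and air, and a hypothetical 200 W CPU with a 10 K coolant temperature rise; none of these numbers are Asetek specifications, and the function name `required_flow_m3_per_s` is illustrative only.

```python
# Approximate room-temperature property values (assumed textbook constants).
WATER_DENSITY = 1000.0   # kg/m^3
WATER_CP = 4186.0        # J/(kg*K)
AIR_DENSITY = 1.2        # kg/m^3
AIR_CP = 1005.0          # J/(kg*K)


def required_flow_m3_per_s(heat_w, density, cp, delta_t_k):
    """Volumetric flow needed to carry heat_w watts with a coolant
    temperature rise of delta_t_k kelvin.

    Derived from Q = m_dot * cp * dT with m_dot = density * V_dot.
    """
    if delta_t_k <= 0:
        raise ValueError("temperature rise must be positive")
    return heat_w / (density * cp * delta_t_k)


if __name__ == "__main__":
    heat = 200.0  # hypothetical CPU heat load, W
    dt = 10.0     # hypothetical coolant temperature rise, K
    water = required_flow_m3_per_s(heat, WATER_DENSITY, WATER_CP, dt)
    air = required_flow_m3_per_s(heat, AIR_DENSITY, AIR_CP, dt)
    print(f"water: {water * 60_000:.2f} L/min")  # m^3/s -> L/min
    print(f"air:   {air * 1000:.1f} L/s")        # m^3/s -> L/s
    print(f"air needs about {air / water:.0f}x the volume flow of water")
```

Under these assumptions, air needs roughly three thousand times the volumetric flow of water to carry the same heat at the same temperature rise, which is why a compact cold plate and small pump can keep up with components that an air heat sink of similar size cannot.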

ServerLSL can also serve as a transitional technology between air cooling and Asetek’s “free cooling” solution, RackCDU D2C. Unlike RackCDU D2C, ServerLSL still exhausts 100% of its heat into the data center air, where existing CRACs and chillers handle the overall load. ServerLSL can therefore serve as a proof point for IT and Facilities teams who understand the benefits of liquid cooling but must address management’s fears about liquid in the data center.

ServerLSL uses Asetek’s patented, high-efficiency, sealed-loop liquid cooling technology to cool the highest-wattage, high-heat-flux CPUs and GPUs. Asetek’s cold plate and pump design, proven in over 3 million units in the field across PCs, workstations and servers, uses the superior thermal properties of liquid to capture more heat and transfer it into the air. Unlike competing liquid cooling designs, Asetek coolers never require data center operators to touch the liquid or refill them.

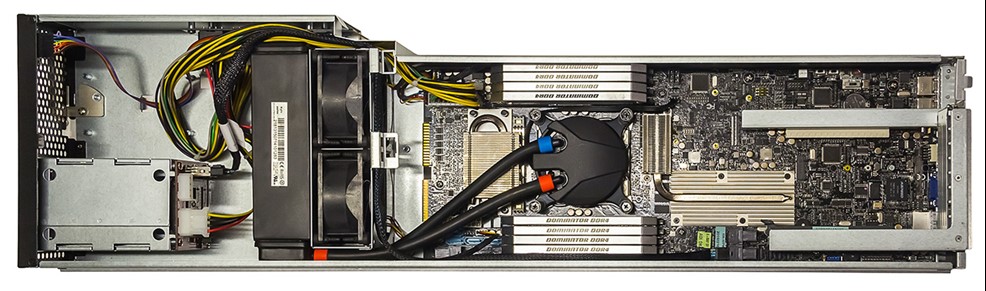

CIARA ORION HF320D-G3 with Asetek ServerLSL

ServerLSL is compatible with servers and blades of 1U and larger form factors. The low-profile Asetek integrated pump/cold plate units have interchangeable mounting mechanisms compatible with Intel and AMD server sockets. Liquid-to-air heat exchangers are available in multiple form factors and scale to almost any performance or density requirement.

Asetek’s ServerLSL is available today in CIARA’s ORION HF320D-G3. CIARA is a leader in servers for high-frequency trading and uses liquid cooling to deliver the highest throughput that market demands. ServerLSL has helped CIARA reach a new level of ultra-high performance, speed and low latency while doubling the density of its previous ORION HF210 and 320 servers.
