Green Data Center Heritage Technology

Energy Efficiency

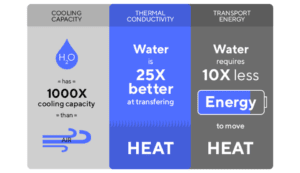

Asetek’s liquid cooling technology provided an energy-efficient alternative to air-cooled data centers.

Asetek’s patented technology could reduce the energy data centers use for cooling by up to 50%. It did this by cooling the processors directly instead of cooling the entire rack.

– Reduced data center energy usage for cooling by up to 50% over traditional air-cooling

– Lowered processor temps, resulting in 4% reduction in solution times

– Minimized latency by maximizing cluster interconnect density

Waste Heat Recovery

Asetek technology enabled the greening of data centers through waste heat recovery and reuse for things like district heating networks.

With Asetek technology, data center operators are in control. They can adjust outlet temperatures independently of the water in the racks and adjust the pump speed to maintain consistent return temperatures.
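As a rough illustration of how pump speed can hold a return temperature steady, here is a minimal sketch of a proportional control step. It is not Asetek's control logic: the function name `next_pump_speed`, the gain, and the speed limits are all hypothetical values chosen for the example.

```python
def next_pump_speed(current_speed, return_temp_c, target_temp_c,
                    gain=0.05, min_speed=0.2, max_speed=1.0):
    """Return a new pump speed as a fraction of full speed (hypothetical model).

    For a fixed heat load, more flow means each litre of coolant picks up
    less heat, so the return temperature drops. The step therefore speeds
    the pump up when the return water is too hot and slows it down when
    the return water is too cool.
    """
    error = return_temp_c - target_temp_c      # positive when too hot
    proposed = current_speed + gain * error    # proportional correction
    # Keep the result inside the assumed safe operating range.
    return max(min_speed, min(max_speed, proposed))


# Example: return water is 2 degrees above a 60 degree target.
speed = next_pump_speed(current_speed=0.5, return_temp_c=62.0, target_temp_c=60.0)
```

A real installation would tune the gain to the loop and would likely add integral action. The sketch only shows which way the adjustment goes.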

In addition, the energy spent on cooling can be recouped, for example by providing 60°C water for district heating or by converting the waste heat into electricity.
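To get a feel for how much heat such a water loop could hand over, the standard relation Q = ṁ · c_p · ΔT can be sketched as below. The flow rate and the 40°C temperature of the water coming back from the heating network are illustrative assumptions, not Asetek figures. Only the 60°C supply temperature comes from the text above.

```python
# Approximate specific heat of liquid water, in J/(kg*K).
WATER_SPECIFIC_HEAT_J_PER_KG_K = 4186.0


def recoverable_heat_kw(flow_kg_per_s, hot_temp_c, cool_temp_c):
    """Heat released when water cools from hot_temp_c to cool_temp_c, in kW."""
    delta_t = hot_temp_c - cool_temp_c
    watts = flow_kg_per_s * WATER_SPECIFIC_HEAT_J_PER_KG_K * delta_t
    return watts / 1000.0


# Assumed example: 2 kg/s of 60 degree water returning at 40 degrees.
heat_kw = recoverable_heat_kw(flow_kg_per_s=2.0, hot_temp_c=60.0, cool_temp_c=40.0)
print(f"Recoverable heat: {heat_kw:.2f} kW")
```

The estimate ignores pipe and heat exchanger losses, so it should be read as an upper bound for the assumed inputs.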

Legacy Products

D2C Ingredient Coolers

Direct to Chip (D2C) liquid cooling technology dramatically increases data center density and maximizes sustained CPU throughput.

D2C liquid cooling provides a distributed cooling architecture to address the full range of heat rejection scenarios in air-cooled and liquid-cooled data centers.

Asetek D2C LC Advantages

Deploying Asetek D2C liquid cooling solutions delivered density optimization, energy efficiency, improved power usage effectiveness (PUE), and OpEx savings, while also enabling the greening of data centers through waste heat recovery and reuse.
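PUE is the ratio of total facility power to the power drawn by the IT equipment, so lower cooling power moves it closer to the ideal value of 1.0. The sketch below shows that arithmetic with made-up loads. The 1,000 kW IT load, 400 kW cooling load, and 100 kW of other overhead are assumptions for illustration, and the halved cooling figure simply applies the "up to 50%" best case.

```python
def pue(it_kw, cooling_kw, other_kw):
    """Power usage effectiveness: total facility power / IT equipment power."""
    if it_kw <= 0:
        raise ValueError("IT load must be positive")
    return (it_kw + cooling_kw + other_kw) / it_kw


# Assumed air-cooled baseline.
baseline = pue(it_kw=1000.0, cooling_kw=400.0, other_kw=100.0)

# Same site with cooling power cut by 50% (best case, for illustration).
improved = pue(it_kw=1000.0, cooling_kw=200.0, other_kw=100.0)

print(f"PUE before: {baseline:.2f}, after: {improved:.2f}")
```

With these assumed loads the PUE falls from 1.50 to 1.30. The actual change at a given site depends on how much of its overhead is cooling.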

Shift CapEx to Compute

- Cooling: Purchase inexpensive dry coolers rather than chillers.

Reduce OpEx

- Utilized power-efficient cooling to eliminate chillers and cooling towers

- Reduced server power by eliminating fans

Reliability

- System Level Design and Support for Maximum Performance and Reliability

When defining its liquid cooling platforms, Asetek qualifies all materials for compatibility while ensuring proper chemical balances within the system.

- Distributed Pumping Advantages

In contrast to centralized pumping, Asetek D2C solutions enable distributed pumping, which is renowned for being extremely reliable because the pumps benefit from constant immersion in fluid.